I wrote about Bulletproofs / inner product arguments (IPA) here in the past, but let me try again. The Bulletproofs protocol allows you to produce zero-knowledge proofs based only on a discrete logarithm assumption and without a trusted setup. This essentially means no pairings (unlike KZG/Groth16), but a scheme more involved than just using hash functions (like STARKs do). This protocol has been used for range proofs by Monero, and as a polynomial commitment scheme in proof systems like Kimchi (the one at the core of the Mina protocol), so it’s quite versatile and deployed in the real world.

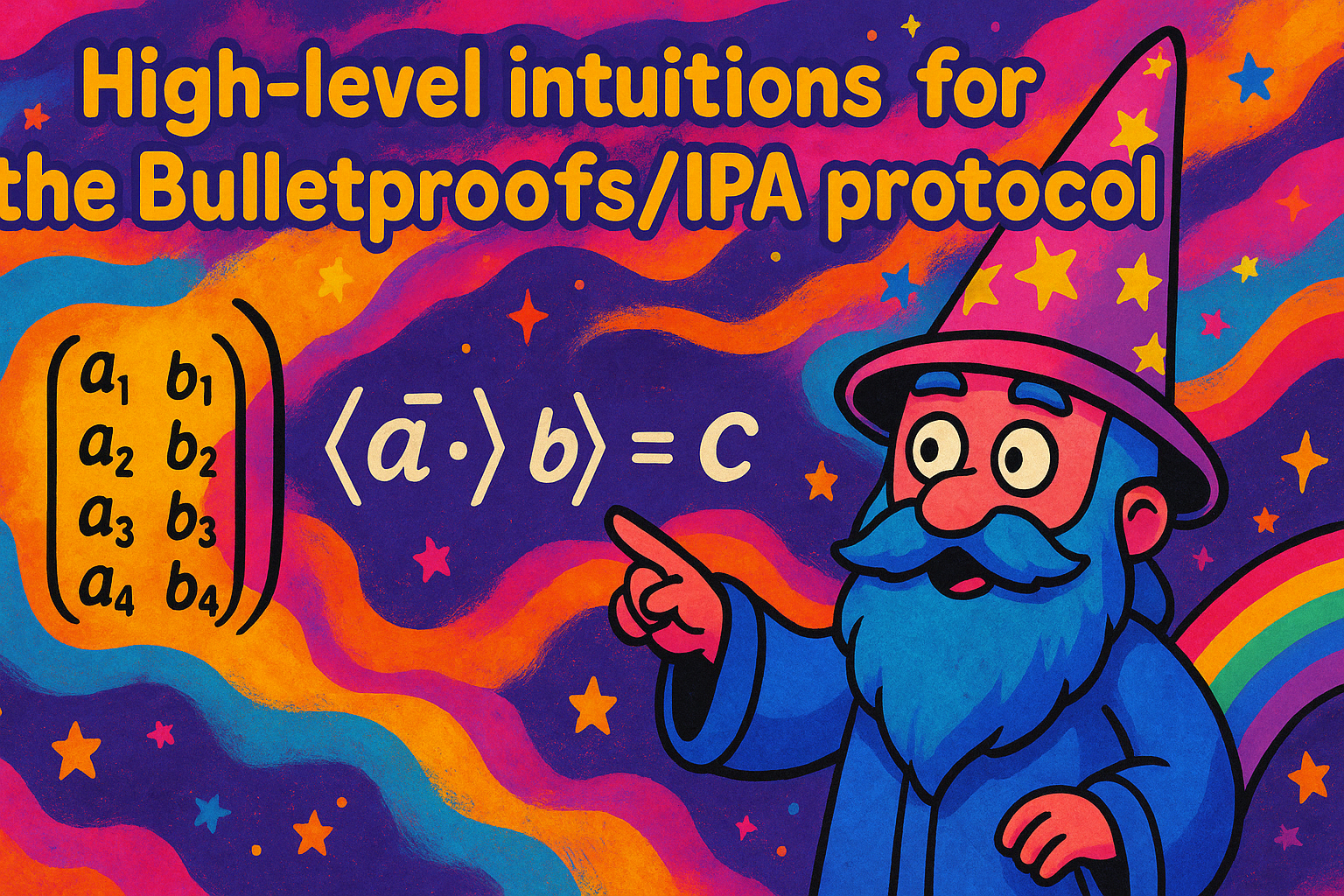

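The easiest way to introduce the protocol, I believe, is to explain that it’s just a protocol to compute an inner product in a verifiable way:

$$\langle \vec{a}, \vec{b} \rangle = c$$

If you don’t know what an inner product is, imagine the following example:

$$\langle (a_1, a_2, a_3, a_4), (b_1, b_2, b_3, b_4) \rangle = a_1 b_1 + a_2 b_2 + a_3 b_3 + a_4 b_4$$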
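If code speaks to you more than notation, here is a minimal sketch of the same computation. The modulus `q` is a small prime I picked for illustration; a real deployment would use the scalar field of its curve.

```python
q = 1019  # a small prime standing in for the scalar field (assumption)

def inner_product(a, b):
    """Sum of the pairwise products of two equal-length vectors, mod q."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b)) % q

c = inner_product([1, 2, 3, 4], [5, 6, 7, 8])  # 1*5 + 2*6 + 3*7 + 4*8
```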

Using Bulletproofs makes it faster to verify a proof than to compute the inner product yourself. But more than that, you can try to hide some of the inputs, or the output, to obtain interesting ZK protocols.

Furthermore, computing an inner product doesn’t sound that sexy by itself, but you can imagine that this is used to do actual useful things like proving that a value lies within a given range (a range proof, as I explained in my previous post), or even that a circuit was executed correctly. But this is out of scope for this explanation :)

Alright, enough intro, let’s get started. Bulletproofs and its variants always “compress” the proof by hiding everything in commitments, such that you have one single point that represents each input/output:

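- $A = \langle \vec{a}, \vec{G} \rangle$

- $B = \langle \vec{b}, \vec{H} \rangle$

- $C = \langle \vec{a}, \vec{b} \rangle Q$

where $\vec{G}$, $\vec{H}$, and $Q$ are independent bases, so you can see each point as a non-hiding Pedersen commitment (the above calculations are multi-scalar multiplications). To drive the point home, let me repeat: single points instead of long vectors make proofs shorter!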
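To make these commitments concrete, here is a toy sketch. Everything about the group is an assumption of mine: I use the order-$q$ subgroup of squares modulo a tiny safe prime and write “point addition” as modular multiplication, which mirrors the algebra but is nowhere near secure. The helper names (`add`, `mul`, `msm`) are hypothetical.

```python
# Toy group: squares modulo the safe prime p = 2q + 1 (assumption, insecure).
p, q = 2039, 1019

def add(P1, P2):
    """'Point addition' of the post, implemented as multiplication mod p."""
    return P1 * P2 % p

def mul(s, P1):
    """'Scalar multiplication' s * P, implemented as exponentiation mod p."""
    return pow(P1, s % q, p)

def msm(scalars, points):
    """Multi-scalar multiplication: s_1 * P_1 + ... + s_n * P_n."""
    acc = 1  # identity element of the group
    for s, P1 in zip(scalars, points):
        acc = add(acc, mul(s, P1))
    return acc

def inner(u, v):
    return sum(x * y for x, y in zip(u, v)) % q

# Bases are squares of small primes; in a real system nobody should know
# any discrete-log relation between them.
G = [pow(g, 2, p) for g in (2, 3, 5, 7)]
H = [pow(h, 2, p) for h in (11, 13, 17, 19)]
Q = pow(23, 2, p)

a = [1, 2, 3, 4]
b = [5, 6, 7, 8]

A = msm(a, G)            # commitment to the first input
B = msm(b, H)            # commitment to the second input
C = mul(inner(a, b), Q)  # commitment to the inner product result
```

Each of `A`, `B`, and `C` is a single group element, no matter how long the vectors are.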

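Because we like examples, let me just give you the commitment of $\vec{a} = (a_1, a_2, a_3, a_4)$ under the bases $\vec{G} = (G_1, G_2, G_3, G_4)$:

$$A = a_1 G_1 + a_2 G_2 + a_3 G_3 + a_4 G_4$$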

Different protocols since Bootleproof (the paper that came before Bulletproofs), like the Halo one I talk about in the first post, aggregate commitments differently. In the explanation above I didn’t aggregate anything, but you can imagine that you could make things even smaller by having a single commitment to the inputs/output.

At this point, a prover can just reveal both inputs $\vec{a}$ and $\vec{b}$, and the verifier can check that they are valid openings of $A$, $B$, and $C$ (or of a single commitment $P = A + B + C$ if you aggregated all three commitments). But this is not very efficient (you have to perform the inner product as the verifier) and it is also not very compact (you have to send the long vectors $\vec{a}$ and $\vec{b}$). I know it’s also not zero-knowledge, but we will just explain Bulletproofs/IPA without hiding, and ignore the hiding part as we usually do when explaining such ZKP schemes.

The prover will eventually send two input vectors by the way, but before doing that they will reduce the problem statement to a much smaller statement $\langle \vec{a'}, \vec{b'} \rangle = c'$ where the vectors $\vec{a'}$ and $\vec{b'}$ both have a single entry. If the original vectors were of size $n$ then Bulletproofs will perform $\log_2 n$ reductions in order to get that final statement (as each reduction halves the size of the vectors), and then will send these “reduced” input vectors (for the verifier to perform the same check as before).

For us, this means that there are two things to understand next:

- how does the reduction work?

- how is it verifiable?

To reduce stuff, we do the same basic operation we’re doing in every “folding” protocol: we pick a challenge $x$ and we multiply it with one half, then add it to the other half. Except that here, because we’re dealing with a much harder algebraic structure to work with (these Pedersen commitments, which as I pointed out in this post, are basically random linear combinations of what you’re committing to, hidden in the exponent), we’ll have to also use the inverse $x^{-1}$.

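Here’s how we’ll fold our inputs with a challenge $x$, splitting each vector into a left half and a right half (for vectors of size 4, $\vec{a}_L = (a_1, a_2)$ and $\vec{a}_R = (a_3, a_4)$):

$$\vec{a'} = x^{-1} \vec{a}_L + x \vec{a}_R$$

$$\vec{b'} = x \vec{b}_L + x^{-1} \vec{b}_R$$

Then you get two new vectors of half the size. This means nothing much so far, so let’s look at what their inner product looks like:

$$\langle \vec{a'}, \vec{b'} \rangle = \langle \vec{a}_L, \vec{b}_L \rangle + \langle \vec{a}_R, \vec{b}_R \rangle + x^{-2} \langle \vec{a}_L, \vec{b}_R \rangle + x^2 \langle \vec{a}_R, \vec{b}_L \rangle = \langle \vec{a}, \vec{b} \rangle + x^{-2} c_L + x^2 c_R$$

for some cross terms $c_L = \langle \vec{a}_L, \vec{b}_R \rangle$ and $c_R = \langle \vec{a}_R, \vec{b}_L \rangle$ that are independent of the challenge $x$ chosen (so when we will Fiat-Shamir this protocol, the prover will need to produce $c_L$ and $c_R$ before sampling $x$).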
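Here is a small sanity check of that identity on scalars only. It assumes one particular folding convention (first input folded with $x^{-1}$ on its left half and $x$ on its right half, second input the other way around), and the names `fold`, `c_L`, and `c_R` are mine. The challenge is hardcoded for illustration, whereas a real verifier would sample it at random.

```python
q = 1019  # toy prime field for the scalars (assumption)

def inner(u, v):
    return sum(s * t for s, t in zip(u, v)) % q

def fold(v, left_coeff, right_coeff):
    """Return left_coeff * v_L + right_coeff * v_R, a vector of half the size."""
    half = len(v) // 2
    return [(left_coeff * lo + right_coeff * hi) % q
            for lo, hi in zip(v[:half], v[half:])]

a = [1, 2, 3, 4]
b = [5, 6, 7, 8]

# Cross terms: computed by the prover before the challenge is known.
c_L = inner(a[:2], b[2:])
c_R = inner(a[2:], b[:2])

x = 7                  # challenge (hardcoded here, random in practice)
x_inv = pow(x, -1, q)  # modular inverse, needs Python 3.8+

a_folded = fold(a, x_inv, x)
b_folded = fold(b, x, x_inv)

# Both sides of the identity.
lhs = inner(a_folded, b_folded)
rhs = (inner(a, b) + x_inv * x_inv * c_L + x * x * c_R) % q
```

The two values `lhs` and `rhs` agree for any nonzero challenge, which is exactly what lets the verifier update the claimed result without seeing the vectors.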

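Wow, did you notice? The new inner product depends on the old one. This means that as a verifier, you can produce the reduced inner product result in a verifiable way by computing

$$c' = c + x^{-2} c_L + x^2 c_R$$

from the old result $c$, the challenge $x$, and the two cross terms $c_L$ and $c_R$ provided by the prover.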

If what you have is a commitment $C = cQ$, then you can produce a reduced commitment $C' = C + \text{stuff}$ where stuff is essentially provided by the prover, and is not going to mess with $C$ because of the challenge $x$ that’s shifting/randomizing that garbage (it’ll look like $x^{-2} L_c + x^2 R_c$ where $L_c$ and $R_c$ are commitments to the cross terms $c_L$ and $c_R$).

So we tackled the question of how to reduce, in a verifiable way, the result of the inner product. But what about the inputs?

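Of course, you can do the same for the commitments of $\vec{a}$ and $\vec{b}$! So essentially, you get $A' = A + x^{-2} L_a + x^2 R_a$ for some points $L_a$ and $R_a$ provided by the prover (and similarly for $B'$).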

We can go over it in a quick example, but it’ll pretty much look the same as what we did above, except that we also have to reduce the generators $\vec{G}$ for the first input (and $\vec{H}$ for the second input).

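The first thing we’ll do is reduce the generators, using the challenge the opposite way around from the input they go with:

$$\vec{G'} = x \vec{G}_L + x^{-1} \vec{G}_R$$

then we will look at what a Pedersen commitment of our reduced first input $\vec{a'} = x^{-1} \vec{a}_L + x \vec{a}_R$ looks like:

$$\langle \vec{a'}, \vec{G'} \rangle = \langle \vec{a}_L, \vec{G}_L \rangle + \langle \vec{a}_R, \vec{G}_R \rangle + x^{-2} \langle \vec{a}_L, \vec{G}_R \rangle + x^2 \langle \vec{a}_R, \vec{G}_L \rangle$$

In other words, $A' = A + x^{-2} L_a + x^2 R_a$ with $L_a = \langle \vec{a}_L, \vec{G}_R \rangle$ and $R_a = \langle \vec{a}_R, \vec{G}_L \rangle$ (and similarly for the second input).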
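The same check can be run on commitments. This is a sketch under my own assumptions: a toy group (squares modulo a tiny safe prime, with “addition” implemented as modular multiplication), a folding convention where the generators use $x$ on the left half and $x^{-1}$ on the right half, and hypothetical names `L_a` and `R_a` for the prover’s cross-term points.

```python
# Toy group: squares modulo the safe prime p = 2q + 1 (assumption, insecure).
p, q = 2039, 1019

def add(P1, P2):
    return P1 * P2 % p          # "point addition"

def mul(s, P1):
    return pow(P1, s % q, p)    # "scalar multiplication"

def msm(scalars, points):
    acc = 1
    for s, P1 in zip(scalars, points):
        acc = add(acc, mul(s, P1))
    return acc

def fold_scalars(v, left_coeff, right_coeff):
    half = len(v) // 2
    return [(left_coeff * lo + right_coeff * hi) % q
            for lo, hi in zip(v[:half], v[half:])]

def fold_points(points, left_coeff, right_coeff):
    half = len(points) // 2
    return [add(mul(left_coeff, lo), mul(right_coeff, hi))
            for lo, hi in zip(points[:half], points[half:])]

G = [pow(g, 2, p) for g in (2, 3, 5, 7)]
a = [1, 2, 3, 4]
A = msm(a, G)

# Prover: cross-term points, sent before the challenge is sampled.
L_a = msm(a[:2], G[2:])
R_a = msm(a[2:], G[:2])

x = 7                  # challenge (hardcoded here, random in practice)
x_inv = pow(x, -1, q)

# Prover's view: commit to the folded input under the folded generators.
a_reduced = fold_scalars(a, x_inv, x)
G_reduced = fold_points(G, x, x_inv)
A_from_opening = msm(a_reduced, G_reduced)

# Verifier's view: update the commitment using only A, L_a, R_a, and x.
A_from_update = add(A, add(mul(x_inv * x_inv, L_a), mul(x * x, R_a)))
```

`A_from_opening` and `A_from_update` are the same group element, even though the verifier never touched `a`.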

In the Bulletproofs protocol, we’re dealing with a single commitment $P = A + B + C$ and so we’ll reduce that statement to $P' = P + \text{stuff}$ where stuff contains the aggregated $L$ and $R$ for all of the separate commitments.

So just a recap, this is what you’re essentially doing with this first round of the protocol:

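- we start from $P = A + B + C$

- the prover produces the points $L$ and $R$

- the verifier samples a challenge $x$

- they both produce $P' = P + x^{-2} L + x^2 R$

At this point the prover can choose to release $\vec{a'}$ and $\vec{b'}$ and the verifier can check that this is a valid opening of $P'$ by comparing it with $\langle \vec{a'}, \vec{G'} \rangle + \langle \vec{b'}, \vec{H'} \rangle + \langle \vec{a'}, \vec{b'} \rangle Q$ (so the verifier needs to produce the reduced bases $\vec{G'}$ and $\vec{H'}$ as well).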
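The recap above can be sketched as one round of code. As before, the group is a toy of my choosing (squares modulo a tiny safe prime, insecure), the folding convention is an assumption, and the challenge is hardcoded instead of being sampled by the verifier.

```python
# Toy group: squares modulo the safe prime p = 2q + 1 (assumption, insecure).
p, q = 2039, 1019

def add(P1, P2):
    return P1 * P2 % p

def mul(s, P1):
    return pow(P1, s % q, p)

def msm(scalars, points):
    acc = 1
    for s, P1 in zip(scalars, points):
        acc = add(acc, mul(s, P1))
    return acc

def inner(u, v):
    return sum(s * t for s, t in zip(u, v)) % q

def fold_scalars(v, lc, rc):
    h = len(v) // 2
    return [(lc * lo + rc * hi) % q for lo, hi in zip(v[:h], v[h:])]

def fold_points(pts, lc, rc):
    h = len(pts) // 2
    return [add(mul(lc, lo), mul(rc, hi)) for lo, hi in zip(pts[:h], pts[h:])]

def commit(a, b, G, H, Q):
    """Aggregated commitment <a, G> + <b, H> + <a, b> Q."""
    return add(add(msm(a, G), msm(b, H)), mul(inner(a, b), Q))

G = [pow(g, 2, p) for g in (2, 3, 5, 7)]
H = [pow(h, 2, p) for h in (11, 13, 17, 19)]
Q = pow(23, 2, p)
a, b = [1, 2, 3, 4], [5, 6, 7, 8]

# 1. we start from P
P = commit(a, b, G, H, Q)

# 2. the prover produces the aggregated cross-term points L and R
L = add(add(msm(a[:2], G[2:]), msm(b[2:], H[:2])), mul(inner(a[:2], b[2:]), Q))
R = add(add(msm(a[2:], G[:2]), msm(b[:2], H[2:])), mul(inner(a[2:], b[:2]), Q))

# 3. the verifier samples a challenge (hardcoded for this sketch)
x = 7
x_inv = pow(x, -1, q)

# 4. they both produce the reduced commitment
P_reduced = add(P, add(mul(x_inv * x_inv, L), mul(x * x, R)))

# The prover releases the folded inputs; the verifier folds the bases itself.
a_red, b_red = fold_scalars(a, x_inv, x), fold_scalars(b, x, x_inv)
G_red, H_red = fold_points(G, x, x_inv), fold_points(H, x_inv, x)
opening_ok = P_reduced == commit(a_red, b_red, G_red, H_red, Q)
```

Note that the two inputs and their bases are folded in opposite directions, which is what makes the non-cross terms come out with no power of `x` attached.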

You can imagine that in Bulletproofs, they don’t stop there, they just notice that this looks like another statement that you could reduce as well, so you do that until your reduced inputs are both of size 1.
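To see the full recursion, here is a sketch that keeps folding until a single entry is left on each side. It is not the real Bulletproofs code: the group is a toy (squares modulo a tiny safe prime, so forging is easy), the Fiat-Shamir challenge only hashes the running commitment with `L` and `R` instead of a full transcript, and the function names are mine.

```python
import hashlib

p, q = 2039, 1019  # toy group of squares modulo a safe prime (assumption)

def add(P1, P2): return P1 * P2 % p
def mul(s, P1): return pow(P1, s % q, p)

def msm(scalars, points):
    acc = 1
    for s, P1 in zip(scalars, points):
        acc = add(acc, mul(s, P1))
    return acc

def inner(u, v): return sum(s * t for s, t in zip(u, v)) % q

def fold_scalars(v, lc, rc):
    h = len(v) // 2
    return [(lc * lo + rc * hi) % q for lo, hi in zip(v[:h], v[h:])]

def fold_points(pts, lc, rc):
    h = len(pts) // 2
    return [add(mul(lc, lo), mul(rc, hi)) for lo, hi in zip(pts[:h], pts[h:])]

def commit(a, b, G, H, Q):
    return add(add(msm(a, G), msm(b, H)), mul(inner(a, b), Q))

def challenge(P, L, R):
    """Simplified Fiat-Shamir: a nonzero scalar derived from P, L, and R."""
    digest = hashlib.sha256(f"{P},{L},{R}".encode()).digest()
    return int.from_bytes(digest, "big") % (q - 1) + 1

def reduce_commitment(P, L, R, x, x_inv):
    return add(P, add(mul(x_inv * x_inv, L), mul(x * x, R)))

def prove(a, b, G, H, Q):
    P, rounds = commit(a, b, G, H, Q), []
    while len(a) > 1:
        h = len(a) // 2
        L = add(add(msm(a[:h], G[h:]), msm(b[h:], H[:h])), mul(inner(a[:h], b[h:]), Q))
        R = add(add(msm(a[h:], G[:h]), msm(b[:h], H[h:])), mul(inner(a[h:], b[:h]), Q))
        x = challenge(P, L, R)
        x_inv = pow(x, -1, q)
        a, b = fold_scalars(a, x_inv, x), fold_scalars(b, x, x_inv)
        G, H = fold_points(G, x, x_inv), fold_points(H, x_inv, x)
        P = reduce_commitment(P, L, R, x, x_inv)
        rounds.append((L, R))
    return rounds, a[0], b[0]

def verify(P, rounds, a_final, b_final, G, H, Q):
    for L, R in rounds:
        x = challenge(P, L, R)
        x_inv = pow(x, -1, q)
        G, H = fold_points(G, x, x_inv), fold_points(H, x_inv, x)
        P = reduce_commitment(P, L, R, x, x_inv)
    if len(G) != 1:  # the proof must contain exactly log2(n) rounds
        return False
    return P == commit([a_final], [b_final], G, H, Q)

G = [pow(g, 2, p) for g in (2, 3, 5, 7)]
H = [pow(h, 2, p) for h in (11, 13, 17, 19)]
Q = pow(23, 2, p)
a, b = [1, 2, 3, 4], [5, 6, 7, 8]

P = commit(a, b, G, H, Q)
rounds, a_final, b_final = prove(a, b, G, H, Q)
accepted = verify(P, rounds, a_final, b_final, G, H, Q)
```

With vectors of size 4 the proof is two `(L, R)` pairs plus two scalars. The verifier here folds the bases round by round, which is the naive way of doing it.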

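Notice that we computed $\langle \vec{a'}, \vec{b'} \rangle Q$ ourselves as the verifier, which is important because you want to make sure that the inner product result is indeed $\langle \vec{a'}, \vec{b'} \rangle$ and not some arbitrary value. Checking this in the reduced form tells you with high probability that this is true as well in the original statement $\langle \vec{a}, \vec{b} \rangle = c$.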

Anyway, that’s it, hopefully that adds some color to what Bulletproofs look like. In real-world implementations, the reductions are not checked one by one; instead, an optimized check aggregates all of them.